Superuser to the rescue...

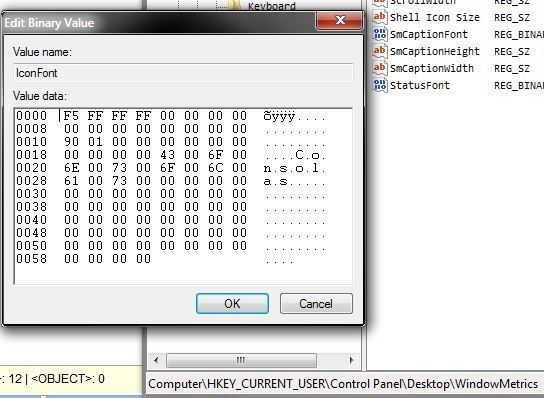

Example:

F4 FF FF FF 00 00 00 00 random bytes but probably control characters

00 00 00 00 00 00 00 00

90 01 00 00 00 00 00 01

00 00 00 00 4D 00 69 00 main string starts here

63 00 72 00 6F 00 73 00

.........[...the structure of this binary string.] It is in the format of LOGFONT (https://msdn.microsoft.com/en-us/library/windows/desktop/dd145037%28v=vs.85%29.aspx), which is divided into 14 fields: the first 20 bytes are 5 long integers in little-endian order, the next 8 bytes are single-byte fields, and the rest is a string.

In my example, F4 FF FF FF means the height (lfHeight) is FFFFFFF4 in hex, read as a signed long int, which is -12 in decimal. A negative height is the character height in pixels, so this is 12 pixels, or 9 points at 96 DPI.
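To double-check the height value, you can decode the four bytes as a little-endian signed 32-bit integer. This is only a quick sketch; the 96 DPI figure used for the point-size conversion is an assumption about the display scaling, not something stored in the entry.

```python
import struct

raw = bytes.fromhex("F4 FF FF FF")

# "<l" means little-endian, signed 32-bit integer
(height,) = struct.unpack("<l", raw)
print(height)  # -12

# Assumption: 96 DPI. A negative lfHeight is the character height in pixels.
dpi = 96
points = abs(height) * 72 / dpi
print(points)  # 9.0
```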

The next 00 00 00 00 means the width. Setting it to 0 would make it automatically calculated.

The next 8 bytes correspond to lfEscapement and lfOrientation (4 bytes each). They set text rotation angles and don't really matter here, so leave them at 0.

The next 4 bytes, 90 01 00 00, are the weight (lfWeight): 00000190 in hex, which is 400 in decimal. 400 corresponds to FW_NORMAL.

The next 3 bytes are lfItalic, lfUnderline, and lfStrikeOut. Pretty self-explanatory.

The next byte is lfCharSet. It states which character set to use. 0x01 is DEFAULT_CHARSET.

The next 4 bytes are four more single-byte fields: lfOutPrecision, lfClipPrecision, lfQuality, and lfPitchAndFamily. They are all 00 in my example.
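The fixed-size part described so far (5 long integers plus 8 single bytes, 28 bytes in total) can be unpacked in one go. The sketch below uses the bytes from the example; the `FIELDS` list and the `values` dict are just illustrative names.

```python
import struct

# First 28 bytes of the example entry (everything before the font name)
header = bytes.fromhex(
    "F4FFFFFF 00000000"   # lfHeight, lfWidth
    "00000000 00000000"   # lfEscapement, lfOrientation
    "90010000 00000001"   # lfWeight, lfItalic, lfUnderline, lfStrikeOut, lfCharSet
    "00000000"            # lfOutPrecision, lfClipPrecision, lfQuality, lfPitchAndFamily
)

FIELDS = [
    "lfHeight", "lfWidth", "lfEscapement", "lfOrientation", "lfWeight",
    "lfItalic", "lfUnderline", "lfStrikeOut", "lfCharSet",
    "lfOutPrecision", "lfClipPrecision", "lfQuality", "lfPitchAndFamily",
]

# "<5l8B" = little-endian, 5 signed 32-bit integers, then 8 unsigned bytes
values = dict(zip(FIELDS, struct.unpack("<5l8B", header)))

for name, value in values.items():
    print(f"{name:18} {value}")
```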

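Then comes the main part. The next 64 bytes are the name of the font you want to use (lfFaceName), stored as UTF-16 little-endian. That is why every plain ASCII character is followed by a 00 byte. The name can be at most 31 characters, and the rest of the 64 bytes must be filled with 00.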
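As a rough illustration of the font name field, the two helpers below convert between a Python string and the 64-byte block. The function names are made up for this example; the encoding is UTF-16 little-endian with 00 padding.

```python
FACE_SIZE = 64  # 32 UTF-16 code units, including the terminating null


def encode_face_name(name: str) -> bytes:
    """Encode a font name into the 64-byte field, padded with 00 bytes."""
    encoded = name.encode("utf-16-le")
    if len(encoded) > FACE_SIZE - 2:  # keep room for the null terminator
        raise ValueError("font name is too long for the 64-byte field")
    return encoded.ljust(FACE_SIZE, b"\x00")


def decode_face_name(field: bytes) -> str:
    """Decode the 64-byte field and cut at the first null character."""
    return field.decode("utf-16-le").split("\x00", 1)[0]


field = encode_face_name("Segoe UI")
print(field[:16].hex(" "))
print(decode_face_name(field))
```

The first bytes of the name in my example, 4D 00 69 00 63 00 72 00 6F 00 73 00, decode to "Micros" the same way.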

In conclusion, to change the font of each part of the system UI, modify the corresponding binary entry according to the structure above.
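If you would rather generate the whole 92-byte value than edit it by hand, something like the sketch below should work. `build_logfont` is a hypothetical helper, and its defaults are simply the values from my example (FW_NORMAL weight, DEFAULT_CHARSET, everything else 0). It only builds the bytes; writing them to the entry is left to you.

```python
import struct


def build_logfont(face: str, height: int = -12, weight: int = 400,
                  charset: int = 1, italic: bool = False) -> bytes:
    """Build a 92-byte value: 5 longs, 8 single bytes, 64-byte font name."""
    name = face.encode("utf-16-le")
    if len(name) > 62:  # 31 characters plus a null terminator
        raise ValueError("font name is too long")
    return struct.pack(
        "<5l8B64s",
        height, 0, 0, 0, weight,        # lfHeight, lfWidth, lfEscapement, lfOrientation, lfWeight
        int(italic), 0, 0, charset,     # lfItalic, lfUnderline, lfStrikeOut, lfCharSet
        0, 0, 0, 0,                     # lfOutPrecision, lfClipPrecision, lfQuality, lfPitchAndFamily
        name,                           # "64s" pads the name with 00 bytes
    )


entry = build_logfont("Segoe UI")
print(len(entry))

# Print 8 bytes per row, like the dump in the example
for i in range(0, len(entry), 8):
    print(entry[i:i + 8].hex(" ").upper())
```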